How Long Does Backlink Indexing Take in 2026?

You have built genuine links, watched analytics like a hawk, and hit refresh on Search Console more times than you care to admit. Then the question arrives: how long until those backlinks actually get indexed and counted?

Short answer: it varies wildly. Some links turn up in days, especially on strong, frequently crawled sites. Others appear after weeks. A fair share never show at all. That variability is normal, not a sign that your campaign has stalled.

What indexing actually means

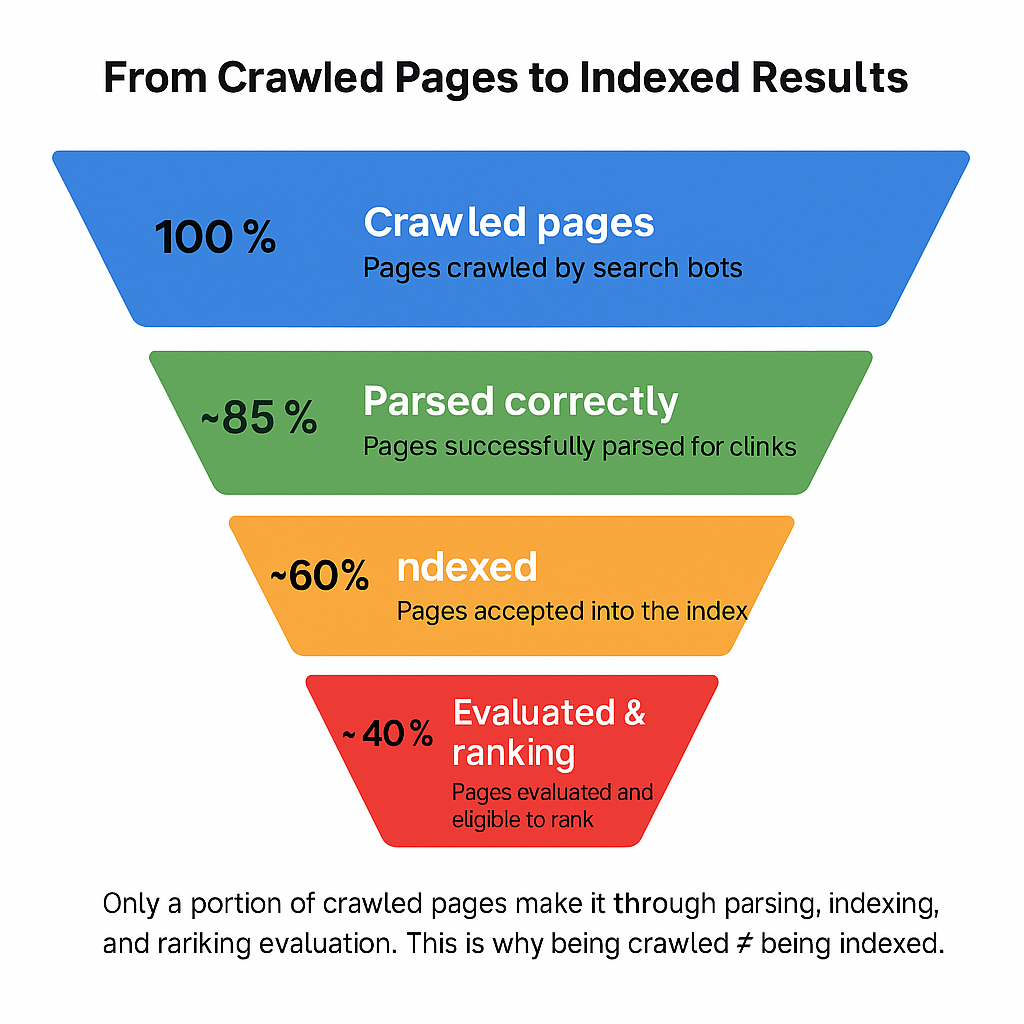

Indexing is not the same as a bot simply visiting a page. A crawler might fetch the page, parse the HTML, then decide whether to store it and the links it contains. Only once the page is in the index, and the link is considered valid, can it influence your visibility.

Crawl, parse, index, evaluate. Each step can add delay. The schedule is driven by the source page, not your site, which is why the same backlink type can be found quickly in one case and much later in another.

How long does backlink indexing take?

Across large sets of data, a mid-range average sits near the 10-week mark for new links to appear in Google’s systems. That number hides a split: links on high-authority, frequently updated domains are often found within days to a couple of weeks, while links on quiet or new sites can linger for months.

Bing runs a similar playbook, with one twist. If you or the linking site submit URLs through Bing Webmaster Tools, discovery can happen very quickly. Without submissions, the same dynamics apply as with Google: strong sources are seen faster, obscure pages much slower.

Here is a practical view of timeframes you can plan around.

| Backlink context | Typical Google indexing time | Notes |
|---|---|---|
| Dofollow on a high-authority, fresh site | Hours to days, often under 1 to 2 weeks | Major news, popular blogs, and active category leaders are crawled frequently |
| Dofollow on a moderate-authority site | About 1 to 4+ weeks | Averages hover near 10 weeks across mixed profiles |
| Dofollow on a low-authority or new site | Several weeks to months | Infrequently crawled pages may remain unseen |
| Nofollow link on any site | Often not indexed or counted | Treated as a hint, with much lower consistency |
| Public social post containing a link | Usually discovered quickly | Helps discovery of the target page rather than passing value |

What speeds things up, what slows things down

Crawl frequency is the lever that matters most. If Googlebot visits the linking page daily, your link can be seen within a day or two. If the page is checked every few months, you will wait. The pace depends on the site’s history, freshness, internal link structure, and server responsiveness.

Authority is the other key ingredient. Search engines assign more crawl budget to sites with strong link profiles and consistent quality. A deep, unloved subpage on a weak domain will sit in the queue, while a front-page article on an established publication will be checked many times.

Context matters. Editorial links within the main body of an article are discovered more reliably than links tucked into comments, old directory listings, or thin press-release farms. Technical rules can block discovery entirely. A robots.txt disallow, a meta robots noindex or nofollow, or a login wall will prevent bots from following your link.

Here are the signals that tend to accelerate discovery and indexing:

- Source freshness: Pages that update often get recrawled promptly.

- Internal prominence: Links on pages close to the homepage are fetched more often.

- Crawlability: Clean robots rules, no blocked resources, fast load times.

- Structured discovery: The linking URL appears in an XML sitemap.

- Engagement: Traffic and social shares encourage bots to revisit a page.

After accounting for the positives, some simple blockers explain most delays:

- Nofollow on the link or page

- Robots.txt disallow on the directory

- Meta robots noindex or nofollow

- Slow, unstable hosting on the source site

- Deep pagination with weak internal links

- Low-authority domain with infrequent updates
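The link-level and page-level blockers in this list can be checked directly against the HTML of the linking page. The following is a minimal sketch using only Python's standard library; the names `LinkDirectiveScanner` and `find_blockers` are hypothetical, and the code assumes you have already fetched the page's HTML as a string. It also treats `rel="ugc"` and `rel="sponsored"` the same way as `nofollow`, which goes slightly beyond what this article covers.

```python
from html.parser import HTMLParser


class LinkDirectiveScanner(HTMLParser):
    """Collects meta robots directives and the rel values of links to one target URL."""

    def __init__(self, target_href: str) -> None:
        super().__init__()
        # Compare URLs without a trailing slash so "/page" and "/page/" match.
        self.target_href = target_href.rstrip("/")
        self.meta_robots: set[str] = set()
        self.link_rels: list[set[str]] = []

    def handle_starttag(self, tag, attrs):
        attr = {name.lower(): (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            # content looks like "noindex, nofollow"; split it into directives.
            parts = attr.get("content", "").split(",")
            self.meta_robots |= {p.strip().lower() for p in parts if p.strip()}
        elif tag == "a" and attr.get("href", "").rstrip("/") == self.target_href:
            self.link_rels.append(set(attr.get("rel", "").lower().split()))


def find_blockers(html: str, target_href: str) -> list[str]:
    """Return human-readable reasons the link may not be followed (empty list if none)."""
    scanner = LinkDirectiveScanner(target_href)
    scanner.feed(html)
    blockers = []
    # "none" is shorthand for "noindex, nofollow".
    if scanner.meta_robots & {"noindex", "none"}:
        blockers.append("page is noindex")
    if scanner.meta_robots & {"nofollow", "none"}:
        blockers.append("page-level nofollow")
    if not scanner.link_rels:
        blockers.append("link not found in HTML")
    elif all(rels & {"nofollow", "ugc", "sponsored"} for rels in scanner.link_rels):
        # Assumption: ugc and sponsored are grouped with nofollow here.
        blockers.append("link carries nofollow/ugc/sponsored")
    return blockers
```

An empty result only means none of these specific blockers were found in the markup. It does not cover robots.txt rules, HTTP headers such as `X-Robots-Tag`, or links injected by JavaScript.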

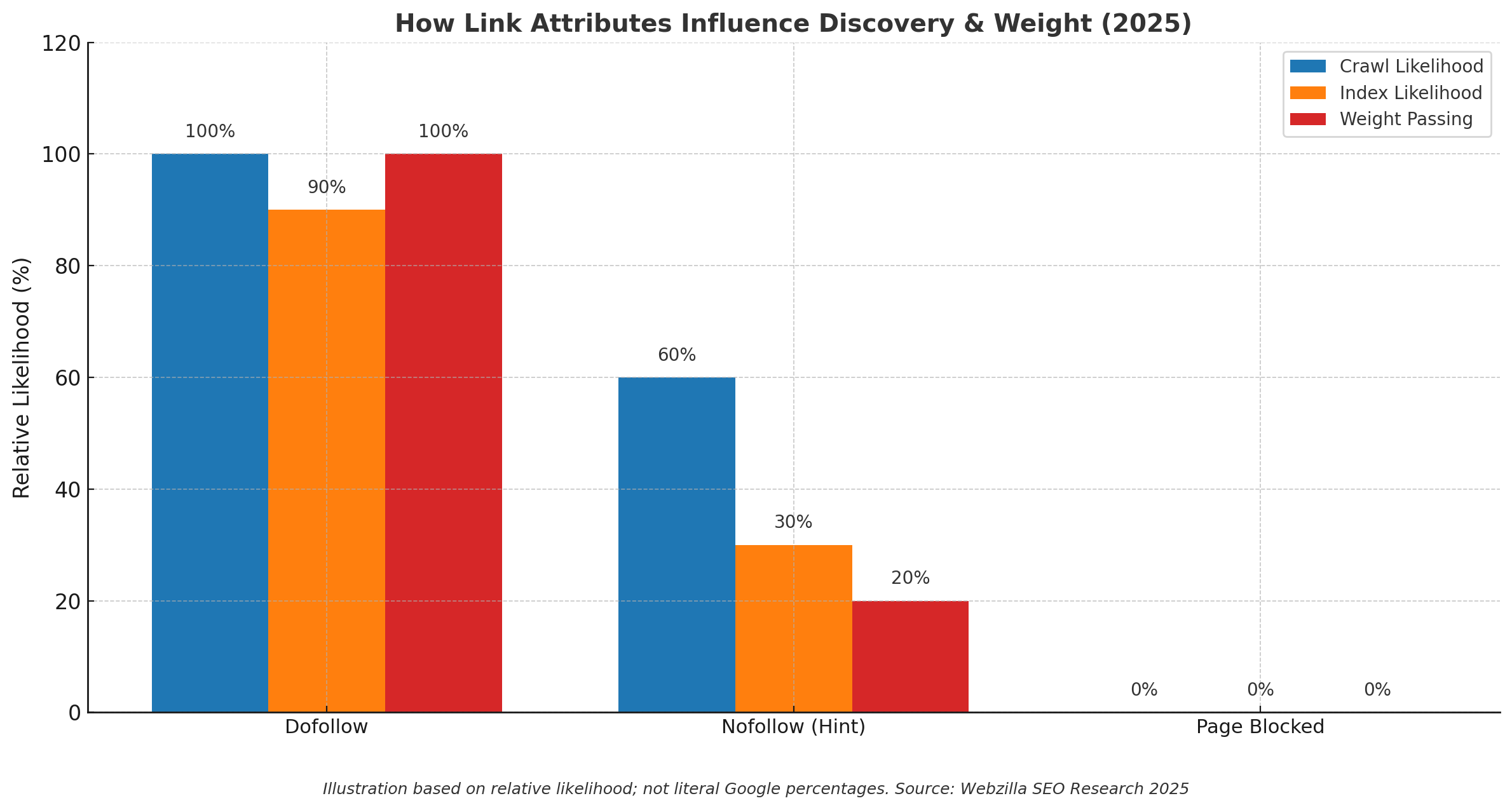

Dofollow, nofollow, and why the attribute still matters

Google announced in September 2019 that nofollow would be treated as a hint, and since March 2020 that has applied to crawling and indexing as well as ranking. That change did not turn nofollow into a reliable signal. It simply means Google may choose to crawl or ignore the link. In practice, a dofollow link (one with no nofollow attribute) is far more likely to be found and considered quickly. A nofollow link on a high-profile page might be crawled for discovery, but it is less likely to carry weight.

Also consider page-level controls. If a page carries a meta robots nofollow, none of its links will be followed. If the domain blocks the path in robots.txt, crawlers will not visit the page in the first place. When a valuable backlink sits on a page with restrictive directives, indexing can stall indefinitely.
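To test whether a robots.txt rule blocks the path of a linking page, Python's standard `urllib.robotparser` can evaluate the rules offline. This is a rough sketch: the helper name `is_path_crawlable` and the example domain are assumptions, and the standard-library parser does simple prefix matching, so it may not agree with Googlebot on rules that rely on wildcards.

```python
from urllib.robotparser import RobotFileParser


def is_path_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example rules as they might appear on a hypothetical publisher site.
rules = "User-agent: *\nDisallow: /private/\n"
print(is_path_crawlable(rules, "https://publisher.example/blog/post"))
print(is_path_crawlable(rules, "https://publisher.example/private/post"))
```

Passing the robots.txt text in as a string keeps the check repeatable. In practice you would download the file from the publisher's domain first and rerun the check after publication.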

Google, Bing, and friends

Google depends on its crawling systems to find links. The cadence varies by URL. Some pages are hit daily, others every few months. That is why two links placed on the same day can have very different fates.

Bing offers a practical advantage through URL Submission. If you control the source site, or have a rapport with the publisher, submitting the linking page can bring it into view much faster. DuckDuckGo reflects Bing’s index for a large portion of results, so improvements there carry across. Yandex and Baidu run separate systems with their own priorities and regional focus.

How to verify a link has been picked up

Start with Google Search Console. The URL Inspection tool only works on properties you have verified, so you can inspect the page that contains your link yourself only if you control the source site; otherwise, ask the publisher to run the check. If the tool reports "URL is on Google", the content is in the index, which is step one. The property owner can also request indexing to prompt a recrawl. The Links report in your own Search Console property is another useful datapoint. It does not list every link, but when it shows your new referring domain, you know Google has at least seen links from that source.

Bing Webmaster Tools offers similar capability. Inspect the linking page, and if needed, submit the URL. The Backlinks report will confirm what Bingbot has found for your site once it processes updated data.

Third-party tools fill gaps. Ahrefs, SEMrush, Moz, and Majestic each maintain their own link graphs. If one of these crawlers has picked up your backlink, the page is likely accessible and unblocked. If they have not seen it after a few weeks, that hints at a crawl or access issue on the source side. These tools also give you first seen dates to benchmark your expectations.

You can also run manual checks through search operators. Search for a unique slice of the anchor text in quotes combined with site:source-domain.com. Google retired its public cached-page links in 2024, so to confirm that the version of the page Google has stored is the one that contains your link, ask the publisher to view the crawled page through URL Inspection in their Search Console.
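If you run the search-operator check for many links, building the query programmatically saves typing. The sketch below is illustrative only: `build_site_query` and `google_search_url` are hypothetical helper names, and the anchor text and domain are placeholders.

```python
from urllib.parse import quote_plus


def build_site_query(anchor_snippet: str, source_domain: str) -> str:
    """Combine a quoted anchor-text snippet with a site: restriction."""
    return f'"{anchor_snippet}" site:{source_domain}'


def google_search_url(query: str) -> str:
    """URL-encode the query so it can be opened in a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)


query = build_site_query("best hiking boots", "source-domain.com")
print(query)
print(google_search_url(query))
```

Open the resulting URL manually. A result for the linking page suggests the page is indexed, but it does not prove the link itself has been counted.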

A practical pacing guide for expectations

Think in three waves. First, a discovery wave that may occur within days for links on fresh, prominent pages. Second, a consolidation wave over the first month where a mix of moderate-authority placements start to appear. Third, a long tail where slower or lower-quality sources turn up, if at all, across two to three months.

This rhythm helps reporting. Instead of asking why a link from a small community blog is not in your reports after 10 days, you can plan that link to surface in the long tail and treat it as a bonus when it does.
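For reporting, the three waves can be turned into a simple rule that says whether a link is late for the wave you planned it into. This is a sketch under stated assumptions: the day cut-offs (14, 30, 90) and the tier-to-wave mapping are illustrative choices, not thresholds given by any search engine.

```python
from datetime import date

# Assumed planning deadlines in days; the waves above are ranges, not exact cut-offs.
WAVE_DEADLINE_DAYS = {"discovery": 14, "consolidation": 30, "long_tail": 90}

# Hypothetical mapping from a source's authority tier to its planned wave.
TIER_TO_WAVE = {"high": "discovery", "moderate": "consolidation", "low": "long_tail"}


def planned_wave(tier: str) -> str:
    """Return the wave a link from this tier is expected to surface in."""
    return TIER_TO_WAVE[tier]


def is_overdue(tier: str, placed_on: date, today: date) -> bool:
    """True if the link has been live longer than its planned wave allows."""
    age_days = (today - placed_on).days
    return age_days > WAVE_DEADLINE_DAYS[planned_wave(tier)]


# A small community blog link, 10 days old, is not overdue under these assumptions.
print(is_overdue("low", date(2026, 1, 1), date(2026, 1, 11)))
```

Adjust the cut-offs to your own first seen data once you have a few months of history.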

What you can do to encourage faster pickup

You cannot force a search engine to index a page or link. You can, however, remove friction and invite faster visits. Focus your effort on the environment around the link and the discoverability of the page that hosts it.

- Get the source page into a sitemap: Ask the publisher to include the new URL in their XML sitemap, and verify the sitemap is listed in robots.txt.

- Check crawl directives: Confirm no robots.txt disallow is blocking the path, and that the page does not carry a noindex or nofollow meta tag.

- Improve internal links: Ensure the linking page is linked from a crawlable menu, hub page, or a recent article so it is not orphaned.

- Prompt recrawls where allowed: Use Google’s URL Inspection request and Bing’s URL Submission on the linking page.

- Boost early engagement: Share the linking page on social, reference it from your own blog, and send legitimate traffic that encourages recrawls.
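The sitemap step in the list above is easy to verify yourself once you have the publisher's sitemap XML. The following sketch uses the standard library's `xml.etree.ElementTree`; `sitemap_contains` is a hypothetical helper, the URLs are placeholders, and the code assumes a plain `urlset` sitemap rather than a sitemap index that points to child sitemaps.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_contains(sitemap_xml: str, page_url: str) -> bool:
    """Return True if page_url appears as a <loc> entry in the sitemap XML."""
    root = ET.fromstring(sitemap_xml)
    locs = {(loc.text or "").strip() for loc in root.findall(".//sm:loc", SITEMAP_NS)}
    return page_url in locs


sample = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://publisher.example/blog/new-post</loc></url>"
    "</urlset>"
)
print(sitemap_contains(sample, "https://publisher.example/blog/new-post"))
```

The comparison is an exact string match, so differences in trailing slashes or `http` versus `https` count as a miss. That is usually what you want, because the sitemap should list the canonical URL.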

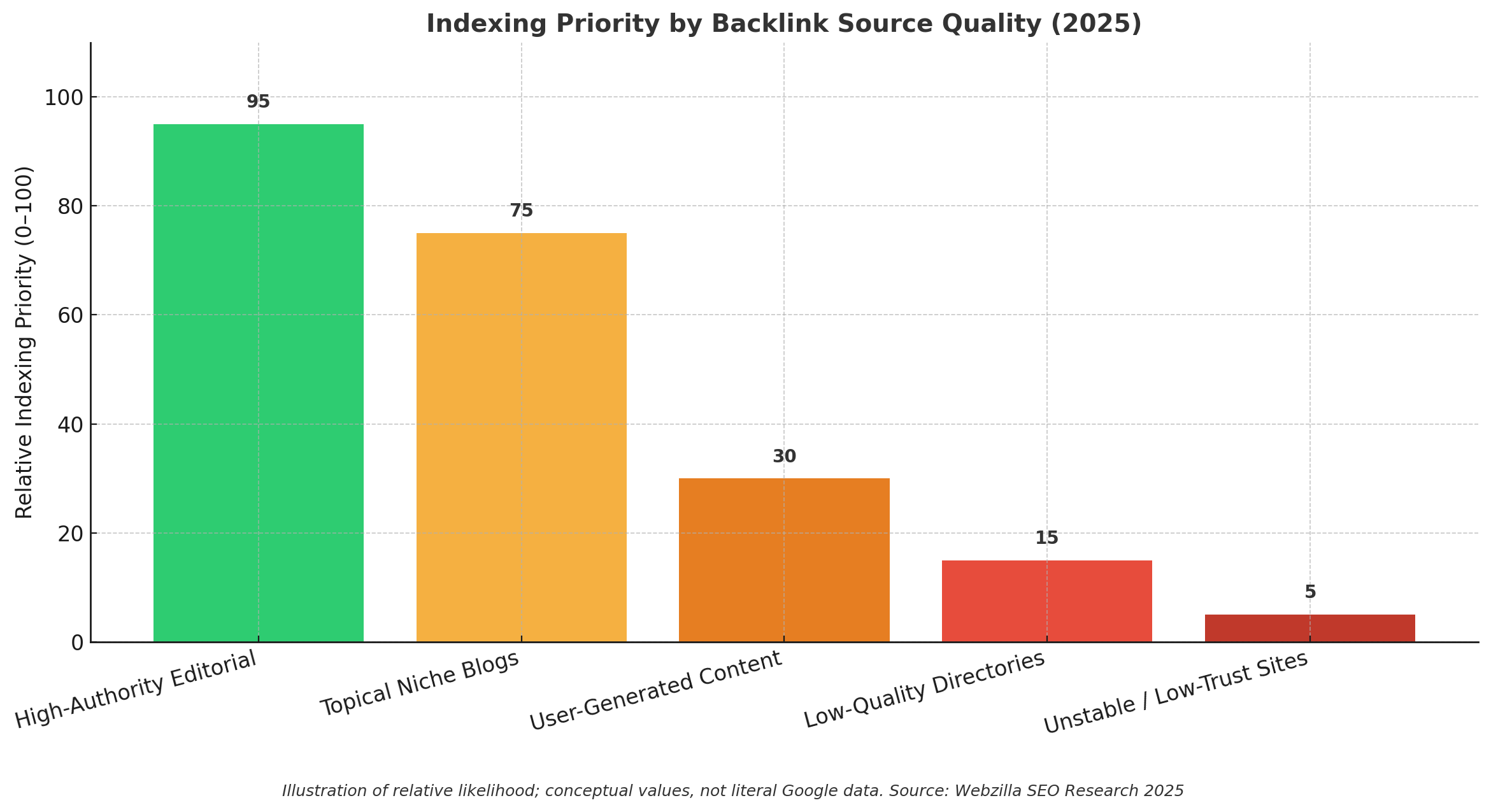

The quality of the source still wins

Not all backlinks deserve the same patience. Editorial links from trusted, well-linked publications get priority and will usually be counted quickly. User-generated links on thin pages, low-quality directories, and sites with unpredictable hosting are often overlooked or deferred.

If a high-value editorial link remains unseen after a few weeks, it is worth a polite check with the publisher. Verify the page is published to the live site, indexed, linked internally, and free of restrictive tags. Small fixes on their side often resolve the delay.

A 90‑day playbook you can repeat

The first month sets the tone. Confirm crawlability on each high-value linking page and prompt indexing requests where appropriate. Track which domains appear in Search Console’s Links report and which show up in your preferred SEO tool. Expect about a third of quality links to be visible somewhere within two weeks.

Month two is where many moderate-authority placements surface. Revisit any outliers, particularly pages that remain unindexed. If you find issues like noindex tags or orphaned URLs, ask the publisher to address them. Social sharing of those pages can help spark recrawls.

Month three is clean-up and consolidation. By now most strong sources will have landed. Anything still missing is either blocked, very low priority, or gone. Treat this as your audit window and refine your outreach patterns to favour placements on pages that are clearly integrated into the host site.

After you have that rhythm, use this short checklist to keep momentum:

- Prioritise sites with regular updates and strong internal linking

- Request inclusion of new URLs in the publisher’s sitemap

- Inspect linking pages for crawl blocks before and after publication

- Submit the linking URLs in Bing, and request indexing in Google

- Track first seen dates across Search Console and a third-party crawler

- Review unindexed outliers at the 6 to 8 week mark and request fixes where needed
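The tracking and review items in this checklist can be kept in a small script or spreadsheet. Here is one possible shape in Python, offered as a sketch: the `Placement` record and both helper functions are hypothetical, the first seen dates are entered by hand from Search Console or a third-party crawler, and the 42-day default corresponds to the start of the 6 to 8 week review window.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Placement:
    source_url: str                    # page that hosts the backlink
    published: date                    # when the link went live
    first_seen: Optional[date] = None  # earliest date any tool reported it


def share_seen_within(placements: list[Placement], days: int = 14) -> float:
    """Fraction of placements first seen within `days` of publication."""
    if not placements:
        return 0.0
    seen = sum(
        1
        for p in placements
        if p.first_seen is not None and (p.first_seen - p.published).days <= days
    )
    return seen / len(placements)


def outliers_for_review(
    placements: list[Placement], today: date, min_age_days: int = 42
) -> list[str]:
    """Source URLs still unseen after at least `min_age_days`."""
    return [
        p.source_url
        for p in placements
        if p.first_seen is None and (today - p.published).days >= min_age_days
    ]
```

Running `share_seen_within` at the two-week mark gives a number to compare against your own baseline, and `outliers_for_review` produces the list of pages worth raising with publishers.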

Why patience, paired with good systems, pays

Indexing speed is a function of the web around your link. When you favour quality sources, make the host page easy to find, and use the official tools to prompt reasonable recrawls, the line from publication to impact shortens. Some links will still take their time, and a few will never appear. That is fine.

Stay focused on the placements that combine editorial relevance with strong crawl signals. Your graph of new links discovered over 90 days will start to look steadier, and your reports will match the real progress you are making.